As generative AI tools become more powerful - achieving gold-medal-level scores at the International Mathematical Olympiad, solving seemingly unsolvable problems like protein folding - I've come to the following conclusion:

We already have Artificial General Intelligence.

This isn't just provocation. Let me explain.

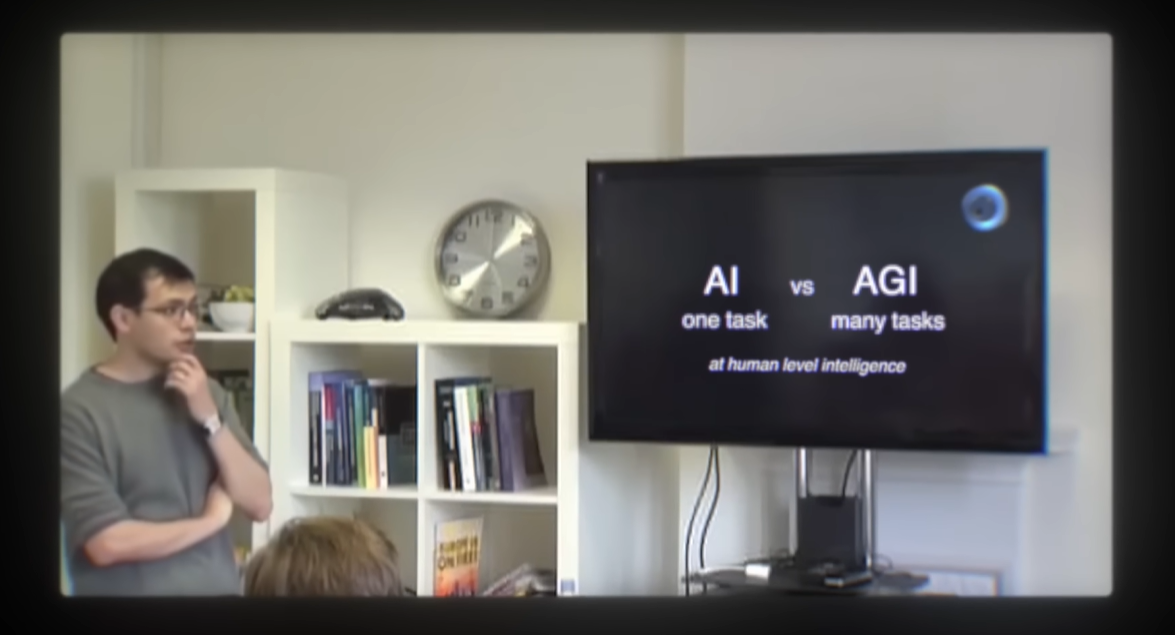

While watching The Thinking Game, the documentary about the rise of Demis Hassabis and DeepMind (which I highly recommend; you can find it on my AI Picks page), I was struck by this image. It's Demis in the early days of Google DeepMind in London, comparing AI to AGI.

His definition was simple:

- AI: Human-level intelligence at one task

- AGI: Human-level intelligence at many tasks

Notice what he did not say. He didn't say "all economically valuable tasks." He didn't say "a majority of tasks." He said many tasks.

This was Demis Hassabis - arguably the #1 AI researcher in the world, now a Nobel Prize winner - articulating to DeepMind (the best AI research team ever assembled) his own definition of AGI. This was before the hype explosion, before the corporate stakes, before AGI became a contractual trigger between Microsoft and OpenAI to be determined by third-party experts.

By his original definition? We've smashed through AGI and barely noticed.

The Evidence Is in Our Daily Lives

Never mind the scientific breakthroughs AI has already enabled. Never mind the creative applications - music generation, image generation, video generation, the transformation of social media. Those are breathtaking in their own right.

But just from my own workflow this week:

- Teaching: Generative AI helped me prepare a lesson for my graduate-level MBA class

- Cooking: It helped me prepare and cook a prime rib over Thanksgiving week - a tradition in my family where we cook beef instead of turkey (although we did have some turkey because we needed to make gravy)

- Negotiation: My wife used it to help negotiate a new salary for a job she's starting at an international startup with complex salary and compensation implications

Three wildly different tasks. Human-level intelligence - or better - at each one.

That's not narrow AI. That's not "one task." That's AGI by the definition the field's leading researcher gave his own team.

The Goalposts Moved Without Us Noticing

Sam Altman has noted how we essentially blew past the Turing test and it was barely in the news. He's predicted we'll reach AGI someday and it'll be similar - we'll freak out for about a week, then move on, and the world will continue.

"I know there's like a quibble on what the Turing test literally is, but the popular conception of the Turing test sort of went whooshing by. Yeah, it was fast. You know, it was just like we talked about it as this most important test of AI for a long time. It seemed impossibly far away. Then all of a sudden it was passed. The world freaked out for like a week, two weeks, and then it's like, 'All right, I guess computers can do that now.' And everything just went on."

— Sam Altman

I think we've already passed that point.

When companies and researchers talk about AGI now, they're really describing what should be called ASI - Artificial Super Intelligence. That is the bar where every economically valuable task that could be done by software or a robot is done by one. We're not even close to that.

But AGI as originally conceived? Human-level intelligence at many tasks?

We're way past it.

Update: Another Perspective

Ilya Sutskever - co-founder of OpenAI and a true peer to someone like Demis Hassabis - offers another angle on why "AGI" has always been a flawed term. In a conversation with Dwarkesh Patel, he explains that AGI isn't some profound milestone we're racing toward. It's simply a reactive label - a response to "narrow AI" that meant nothing more than "not narrow."

"The term AGI - why does this term exist? The reason the term AGI exists is, in my opinion, not so much because it's a very important, essential descriptor of some end state of intelligence, but because it is a reaction to a different term that existed. The term is 'narrow AI.' If you go back to ancient history - game-playing AI, chess AI, computer games AI - everyone would say, 'Look at this narrow intelligence. Sure, the chess AI can beat Kasparov, but it can't do anything else. It is so narrow - artificial narrow intelligence.'

So in response, as a reaction to this, some people said, 'Well, this is not good. It is so narrow. What we need is general AI - an AI that can just do all the things.' And that term just got a lot of traction."

— Ilya Sutskever

By this framing, AGI was never a well-defined destination - it was just "anything other than narrow." And by that measure, we crossed the line a while ago. What we're really talking about when we discuss some future "AGI moment" is superintelligence.

Update: The Shifting Goalposts

December 17, 2025

Even among researchers actively working to define and measure AGI, there's an acknowledgment that the goalposts keep moving. In a recent interview at NeurIPS 2025, Diana Hu (Partner at Y Combinator) asked Greg Kamradt (President of the ARC Prize Foundation) what the world should conclude if a model scored 100% on their ARC-AGI benchmarks - the very benchmarks designed to test for general intelligence.

His answer proves my point:

Diana Hu: Let's wave a magic wand. Suppose a super-amazing team suddenly launches a model tomorrow that scores 100% on the ARC-AGI benchmarks. What should the world update about its priors of what AGI is? How would the world change?

Greg Kamradt: It's funny you ask that. The question of what AGI actually is goes very deep, and we could spend much more time on it. From the beginning, François has always said that the thing that solves ARC-AGI is necessary for AGI, but not sufficient.

What that means is that the system that solves ARC-AGI 1 and 2 would not itself be AGI, but it would be an authoritative demonstration of generalization.

Our claim for V3 is similar: the system that beats it still won't be AGI. However, it would be the strongest evidence we've seen to date that a system can genuinely generalize.

If a team came out tomorrow and beat it, we'd obviously want to analyze that system and understand where the remaining failure points are. Like any good benchmark creator, we want to continue guiding the world toward what we believe proper AGI should look like.

— Diana Hu & Greg Kamradt, "How Intelligent Is AI, Really?" at NeurIPS 2025

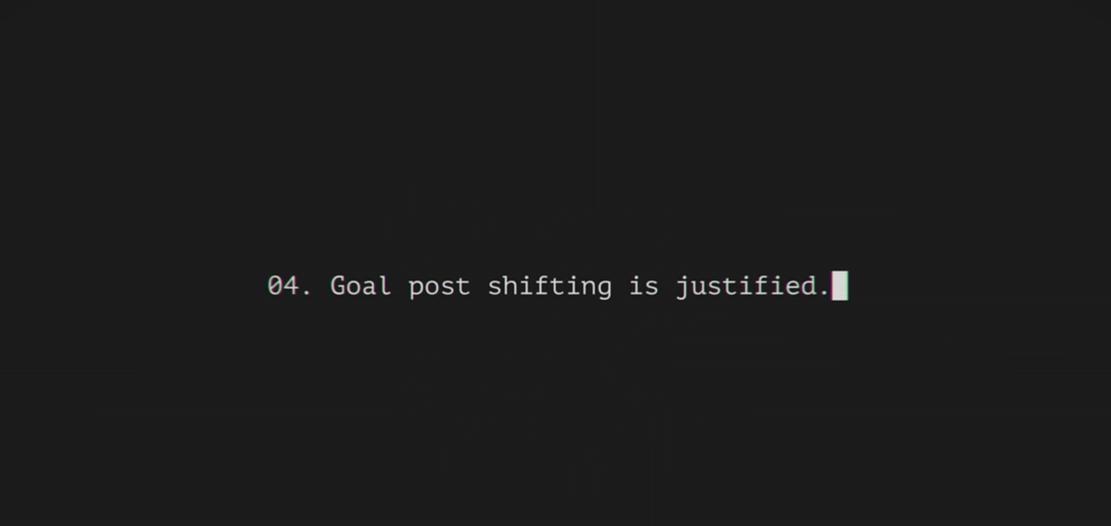

Did you catch that? Even if a model completely surpassed the ARC-AGI benchmarks - the very tests designed to identify AGI - they would simply use that as a foundation to create new benchmarks. The system "still won't be AGI." They want to "continue guiding the world toward what we believe proper AGI should look like."

This constant shifting of the AGI goalposts is confusing people. It's not good for the field of AI or the broader ecosystem of businesses and organizations trying to understand what's happening.

Quite simply: we already have AGI because we have tools that generalize across many tasks and can learn. What we're really after now is superintelligence.

Update: We Need New Goalposts

December 29, 2025

Over the past year I've become a fan of Dwarkesh Patel's speaking and writing. His YouTube channel features an incredible guest list, and his original thinking on AI is refreshing and necessary for those of us who care about these technologies and their impact on the world.

Recently, Dwarkesh posted his thoughts on AI to wrap up 2025. While he has his own hobby horse about the need for continual learning, his discussion touched on my hobby horse: we already have AGI, and there has been non-stop goalpost shifting as these technologies advance.

Most people discussing the definition of AGI are computer scientists and researchers in frontier labs - rightfully approaching that acronym from a perspective rooted in scientific research into machine intelligence. I have no such background. My background is in business strategy.

Unfortunately, what is happening to the field of artificial intelligence has already happened to the field of business strategy. The core idea - the central topic of the entire field - is being twisted and warped by a lack of sufficiently precise language. Strategy is the single most important topic to the success of organizations, yet it's conflated with "planning" so often that the word has lost almost all meaning. (For an excellent discussion of what strategy is versus what it is not, see Roger Martin's viral video on business strategy.)

The same thing is happening to Artificial General Intelligence.

"Now you might be like, look, how can the standard have suddenly become labs have to earn tens of trillions of dollars of revenue a year, right? Until recently, people were saying: can these models reason? Do these models have common sense? Are they just doing pattern recognition?

And obviously, AI bulls are right to criticize AI bears for repeatedly moving the goalposts, and this is very often fair. It's easy to underestimate the progress that AI has made over the last decade. But some amount of goalpost shifting is actually justified. If you showed me Gemini 3 in 2020, I would have been certain that it could automate half of knowledge work.

And so we keep solving what we thought were the sufficient bottlenecks to AGI. We have models that have general understanding. They have few-shot learning. They have reasoning. And yet we still don't have AGI.

So what is a rational response to observing this? I think it's totally reasonable to look at this and say, 'Oh, actually there's much more to intelligence and labor than I previously realized.' And while we're really close, and in many ways have surpassed what I would have previously defined as AGI in the past, the fact that model companies are not making the trillions of dollars in revenue that would be implied by AGI clearly reveals that my previous definition of AGI was too narrow."

— Dwarkesh Patel, "2025 Year in Review"

Dwarkesh makes it clear: the goalposts are indeed shifting. If you had shown him Gemini 3 in 2020, he would have been certain that it could automate half of knowledge work - that we had achieved AGI. That prediction about knowledge work would have been wrong, but he is correct that by his previous standards, we have already achieved AGI.

The correct way forward is not to continue moving the goalposts.

The correct way forward is to recognize that we have already achieved an intelligence - via machines - that generalizes across tens of thousands of use cases. We do not need to redefine AGI as these models continue to advance. We need to accept that we already have an intelligence that is no longer narrow, that generalizes across many economically useful domains, and that is wildly powerful as is.

Then we need to generate new language for the new levels of intelligence that remain ahead.

One day a superintelligence will arrive that can perform all economically valuable tasks. We need a new way to discuss those thresholds of capability - without confusing the general public or nitpicking with other AI enthusiasts and scholars about a term that has already been satisfied.

Update: The Terminology Is Breaking Down

January 11, 2026

The AI field would benefit from a simpler, settled understanding of what AGI means. We already have AI tools that generalize exceptionally well across countless use cases - which should satisfy a layman's understanding of "artificial general intelligence." Now that the term has crossed into popular culture, it's better to anchor on this basic definition and develop refined terminology for the more technical thresholds researchers are actually pursuing.

Consider a recent conversation between Elon Musk and Peter Diamandis. In rapid succession, Musk made three claims:

"We are in the singularity for sure. We're in the midst of it right now for sure."

— Elon Musk (1:15:24)

"Yeah, I think we'll hit AGI next year in '26."

— Elon Musk (1:16:05)

"I'm confident by 2030 AI will exceed the intelligence of all humans combined."

— Elon Musk (1:16:17)

The singularity refers to a hypothetical point where AI becomes capable of recursive self-improvement, triggering runaway technological growth that fundamentally transforms civilization. In most formulations, the singularity presupposes that AGI has already arrived - it's what happens after general intelligence emerges.

So an informed observer might struggle to reconcile these statements: If we're already in the singularity, how is AGI still a year away? And isn't "exceeding the intelligence of all humans combined" closer to what we'd call superintelligence?

This isn't a criticism of Musk - he's speaking conversationally, not writing a technical paper. But it illustrates exactly why the terminology is failing us. When even well-informed commentators use AGI, singularity, and superintelligence almost interchangeably, it signals that the vocabulary has outlived its usefulness.